AI Research Agents Compared: OpenAI, Gemini, Grok, and Perplexity

The Rise of AI Research Agents (and Why They Matter)

Good morning, everyone!

LLMs like ChatGPT and Gemini handle lots of everyday tasks: summarizing documents, brainstorming ideas, answering customer queries, and much more.

But these tools fall short when you need real depth, multi-step analysis, complex synthesis, and actual research.

The solution? AI research agents. Hybrids that merge conversational AI with autonomous web browsing, tool integrations, multi-step reasoning and multi-step actions.

Yes, those already exist, and they are real, working, useful agents. Unlike chatbots, they don’t just react; they think (in a mechanical, step-by-step way, not in a “developing sentience” way). They break down problems, gather data, and analyze it like a junior research assistant with unlimited energy but perhaps still questionable judgment.

Rather confusingly, these AI research agents have been branded with the same name, “Deep Research”, across many leading AI players, whether it’s OpenAI, xAI, Perplexity, or Gemini.

Let's demystify them, compare them, and learn how to get the most out of each.

But first, anyone who uses or builds such AI products needs to know how to optimize them, and that can only be done by building proper evaluations. This leads to the sponsor of this iteration, who has created an amazing course on exactly that.

1️⃣ (Sponsor) From Guesswork to Growth: The Analyze → Measure → Improve Loop for LLMs

When building with LLMs, if you’re not measuring, you’re guessing. Here’s how I show clients we’re delivering real value.

It builds trust because it’s measurable and clear. The secret, unsurprisingly, is solid evaluations.

LLM apps usually fail for three simple reasons:

You are not looking at real data and outputs.

“Good” isn’t defined tightly. The prompt/spec leaves room for interpretation.

Even with a tight spec, the model misapplies it on new inputs, e.g., it sees “Elon Musk” in the body and extracts him as the sender.

If you don’t evaluate, you just tweak models and prompts forever, repeating the same mistakes and moving from one local minimum to another.

The highest-ROI move is to analyze the errors of your system.

Read real traces, the full story: user input, intermediate LLM calls, tools, final answer. Don’t guess; look. It allows our team to know what needs to be worked on and where to go next.
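As a rough illustration of what reading traces can look like in practice, here is a minimal sketch that stores each trace with the parts listed above and tallies the failure modes a human reviewer noted. The `Trace` class, its field names, and the failure labels are all hypothetical, invented for this example rather than taken from any particular tool.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Trace:
    """One end-to-end run of the app (hypothetical structure)."""
    user_input: str
    final_answer: str
    llm_calls: list = field(default_factory=list)   # intermediate LLM calls
    tool_calls: list = field(default_factory=list)  # tools invoked along the way
    failure_mode: Optional[str] = None              # note added by a human reviewer


def count_failure_modes(traces):
    """Return (failure_mode, count) pairs, most frequent first."""
    counts = Counter(t.failure_mode for t in traces if t.failure_mode)
    return counts.most_common()


# Made-up reviewed traces, for illustration only.
reviewed = [
    Trace("Who sent this email?", "Elon Musk", failure_mode="wrong_sender"),
    Trace("Who sent this email?", "Alice"),
    Trace("Extract the subject", "{subject: ", failure_mode="invalid_json"),
    Trace("Who sent this one?", "Elon Musk", failure_mode="wrong_sender"),
]
print(count_failure_modes(reviewed))
```

The most frequent failure mode at the top of that list is usually a sensible place to start working.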

The next step is to build custom evaluations that reflect your product. You can’t just use online benchmarks for that.

Start simple with code checks: JSON validity, tool errors, or schema constraints. These are fast, cheap, and deterministic, so you don’t have to be in the loop for every check.
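To make the idea of a code check concrete, here is a minimal sketch using only Python's standard library. It assumes the model is supposed to return a JSON object with `sender` and `subject` fields; that schema and the `check_output` function are hypothetical, so swap in whatever your own product expects.

```python
import json

# Hypothetical schema for an email-extraction task: field name -> expected type.
REQUIRED_FIELDS = {"sender": str, "subject": str}


def check_output(raw_output: str) -> list:
    """Return the names of failed checks; an empty list means the output passed."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["invalid_json"]
    if not isinstance(data, dict):
        return ["not_a_json_object"]

    failures = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in data:
            failures.append(f"missing_field:{name}")
        elif not isinstance(data[name], expected_type):
            failures.append(f"wrong_type:{name}")
    return failures


print(check_output('{"sender": "alice@example.com", "subject": "Q3 report"}'))
print(check_output('{"sender": 42}'))
print(check_output("not json"))
```

Because a check like this is deterministic, it can run on every output without a human or another model in the loop.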

Make a proper dataset and treat your judge like a model. It’s just as important! Evaluate your retrieval, context and generation.

You still want to evaluate the subjective stuff! Use an LLM-as-judge, but calibrate it with your expert’s knowledge!
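One simple way to think about calibrating a judge is to compare its pass/fail verdicts with an expert's verdicts on the same outputs. The sketch below does only that comparison; the `judge_agreement` function and the labels are made up for illustration, and this is not presented as the method taught in the course.

```python
def judge_agreement(expert_labels, judge_labels):
    """Compare LLM-judge verdicts with expert verdicts (True = pass, False = fail)."""
    if len(expert_labels) != len(judge_labels) or not expert_labels:
        raise ValueError("Need two non-empty label lists of equal length.")

    pairs = list(zip(expert_labels, judge_labels))
    judge_on_expert_pass = [j for e, j in pairs if e]
    judge_on_expert_fail = [j for e, j in pairs if not e]
    nan = float("nan")

    return {
        # Share of outputs where judge and expert gave the same verdict.
        "agreement": sum(e == j for e, j in pairs) / len(pairs),
        # Of the outputs the expert passed, the share the judge also passed.
        "true_pass_rate": (sum(judge_on_expert_pass) / len(judge_on_expert_pass)
                           if judge_on_expert_pass else nan),
        # Of the outputs the expert failed, the share the judge also failed.
        "true_fail_rate": (sum(not j for j in judge_on_expert_fail) / len(judge_on_expert_fail)
                           if judge_on_expert_fail else nan),
    }


# Made-up labels, for illustration only.
expert = [True, True, False, False, True]
judge = [True, False, False, True, True]
print(judge_agreement(expert, judge))
```

If the judge rarely fails the outputs your expert fails, its pass rate will flatter your system, so it is worth looking at the two rates separately rather than at overall agreement alone.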

If you want a complete, application-centric system, not relying on vibes, the best resource I’ve found is the course by Hamel Husain (with Shreya Shankar).

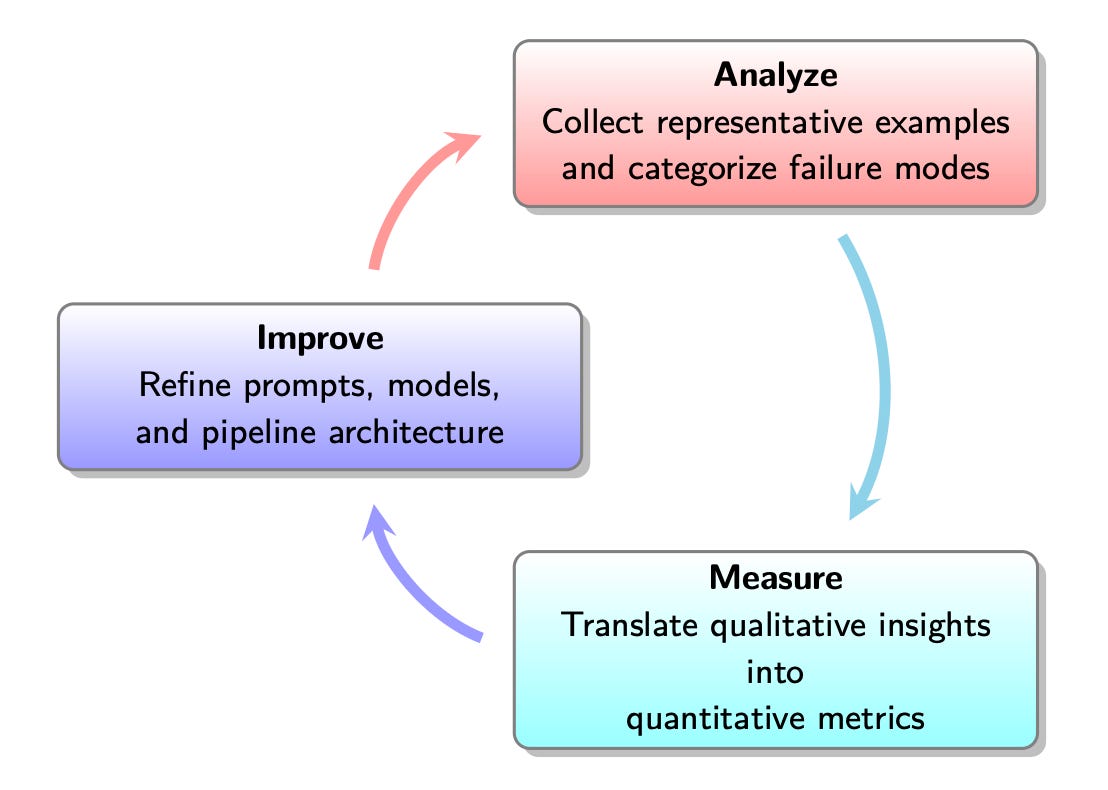

Their course turns this whole process into a repeatable Analyze → Measure → Improve loop with hands-on practice.

If you're interested, I've got a 35% discount on the course here: https://maven.com/parlance-labs/evals?promoCode=whatsai-louis

2️⃣ OpenAI’s $200/Month Research Agent: Is It Worth It?

A prominent example is OpenAI’s Deep Research, built on its o3 model, which excels at tackling detailed research tasks.

This isn’t just a chatbot spitting out text. It actively investigates, often taking 5 to 30 minutes to pull together detailed, citation-backed reports. That’s a long time in AI terms, and it can use a lot of tokens, but the payoff is depth and reliability (well, mostly: LLMs still hallucinate, and we’ll get to that).

Designed for professionals, these agents streamline workflows such as conducting market analysis, reviewing literature, or synthesizing regulatory data, and they do so by providing comprehensive, citation-backed results.

So how can professionals like us make use of these Agents? Watch the video (or read the article version here):

3️⃣🚀 Ready to actually use AI at work—without needing to code?

If you’re a business professional who wants to lead with AI instead of watching from the sidelines, this course is for you. You’ll learn how to:

Use tools like ChatGPT, Claude, Gemini, and Perplexity effectively

Master prompting, reasoning, and no-code workflows

Automate research, writing, analysis, and team tasks

Create custom AI solutions that save hours every week

Drive smart, strategic AI adoption in your team or company

👉 Join the July cohort of the “AI for Business Professionals” course, starting with our kick-off call, here: https://academy.towardsai.net/courses/ai-business-professionals?ref=1f9b29

No technical background needed. Just real-world AI skills designed to boost your output, leadership, and career.

And that's it for this iteration! I'm incredibly grateful that the What's AI newsletter is now read by over 30,000 incredible human beings. Click here to share this iteration with a friend if you learned something new!

Looking for more cool AI stuff? 👇

Looking for AI news, code, learning resources, papers, memes, and more? Follow our weekly newsletter at Towards AI!

Looking to connect with other AI enthusiasts? Join the Discord community: Learn AI Together!

Want to share a product, event or course with my AI community? Reply directly to this email, or visit my Passionfroot profile to see my offers.

Thank you for reading, and I wish you a fantastic week! Be sure to get enough sleep and physical activity next week!

Louis-François Bouchard